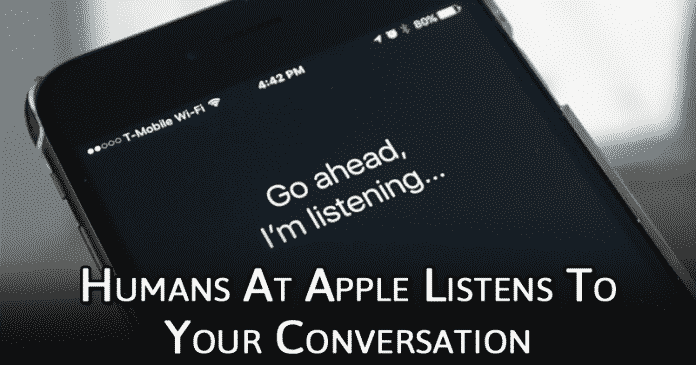

Recently, The Guardian reported that Apple contractors have been listening to users' conversations all along to improve the quality of Siri. According to the report, the recordings Siri captures include couples having sex, drug deals, and confidential medical information.

Apple’s Siri Listens To People Having Sex, Drug Deals & Medical Information

There is no doubt that personal assistants like Siri, Cortana, Alexa, and Google Assistant make our lives more comfortable and fun. However, these voice assistants are not as private as you might think. Back in April 2019, it was revealed that Amazon employs staff to listen to Alexa recordings.

In fact, the companies behind these services tend to have employees or contractors manually review voice clips from their users. This is done to improve the quality of the voice assistant.

Google workers, too, were found to be saving users' recordings to enhance the skills of its AI assistant. We are bringing this up because The Guardian recently reported that Apple contractors have been listening to users' conversations all along to improve the quality of Siri.

According to the report, the recordings Siri captures include couples having sex, drug deals, and confidential medical information. The conversations were often recorded through Apple devices such as the Apple Watch and HomePod.

Although Apple does not explicitly disclose that it sends users' Siri recordings to its contractors, it has confirmed that it stores a small portion (around 1%) of the recordings to improve the personal assistant.

Apple told The Guardian, “A small portion of Siri requests are analyzed to improve Siri and dictation. User requests are not associated with the user’s Apple ID. Siri’s responses are analyzed in secure facilities and all reviewers are under the obligation to adhere to Apple’s strict confidentiality requirements.”

An anonymous whistleblower who spoke to The Guardian said that Apple should expressly tell its users that Siri content might be reviewed by a human because the recordings often contain business dealings and sexual encounters.

So, what do you think about this? Share your views with us in the comment box below.